We spent most of the day yesterday in Amsterdam, trying out a new iPhone eye-tracking setup.

The friendly usability experts from valsplat contacted us a while ago to see if we wanted to participate and test one of our iPhone games with eye-tracking. The goal would be to gain insight into player behavior by analyzing what the players’ eyes were looking at during gameplay. Excited about this opportunity, we set off for their offices in the center of Amsterdam.

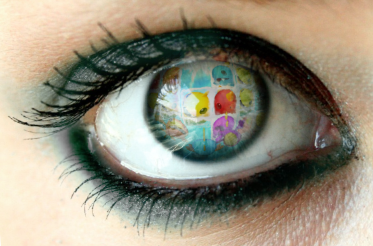

Normally, eye-tracking is done by placing someone in front of a monitor that shows the game or website being tested, with the eye-tracking camera integrated into the monitor’s casing. The output is a little red dot moving around the screen, based on where someone is looking. For iPhone eye-tracking this is not an option, because you cannot stuff a camera into its casing, so they came up with an alternative setup.

Instead of explaining it, we’ll show it to you below.

Thanks for this, Martijn, lovely as always!

So, in addition to the monitor and eye-tracking camera, they mounted a second camera focused on the player’s hands and fed its image back to the monitor with the eye-tracking camera.

Still with me? Good!

We tried the system on eight test subjects (GLaDOS would be proud, although we did not kill any of them) with wildly varying results. The best ones acted as if it were business as usual, while one of them got a headache during the hour-long test. I’m inclined to attribute it to the small latency between moving your hands and seeing the image on the monitor update, combined with the fact that you lose the depth information you’d normally have when looking straight at your hands.

We noticed some people performing better while looking straight at their hands, but difficulty testing is not really the point of using eye-tracking, as you can easily do that by gathering statistics. With this setup, even the guy who ended up with a headache (sorry about that) provided us with valuable information.

It’s truly amazing, and a bit creepy, to see what someone else is looking at with such precision. We could, for instance, see how long someone took to read each word of the tutorial screens. We could see the moment someone first noticed the HUD next to the playfield. We could see whether our red warning glow was conspicuous enough for players to notice, and if they did, whether they were constantly distracted by it.

The setup doesn’t work for everyone yet, but since we made tweaks that improved the test results over the course of the day itself, we’re pretty confident they’ll be able to get a great working setup for iPhone eye-tracking.